

This week’s system design refresher:

Prompt Injection, Clearly Explained (YouTube video)

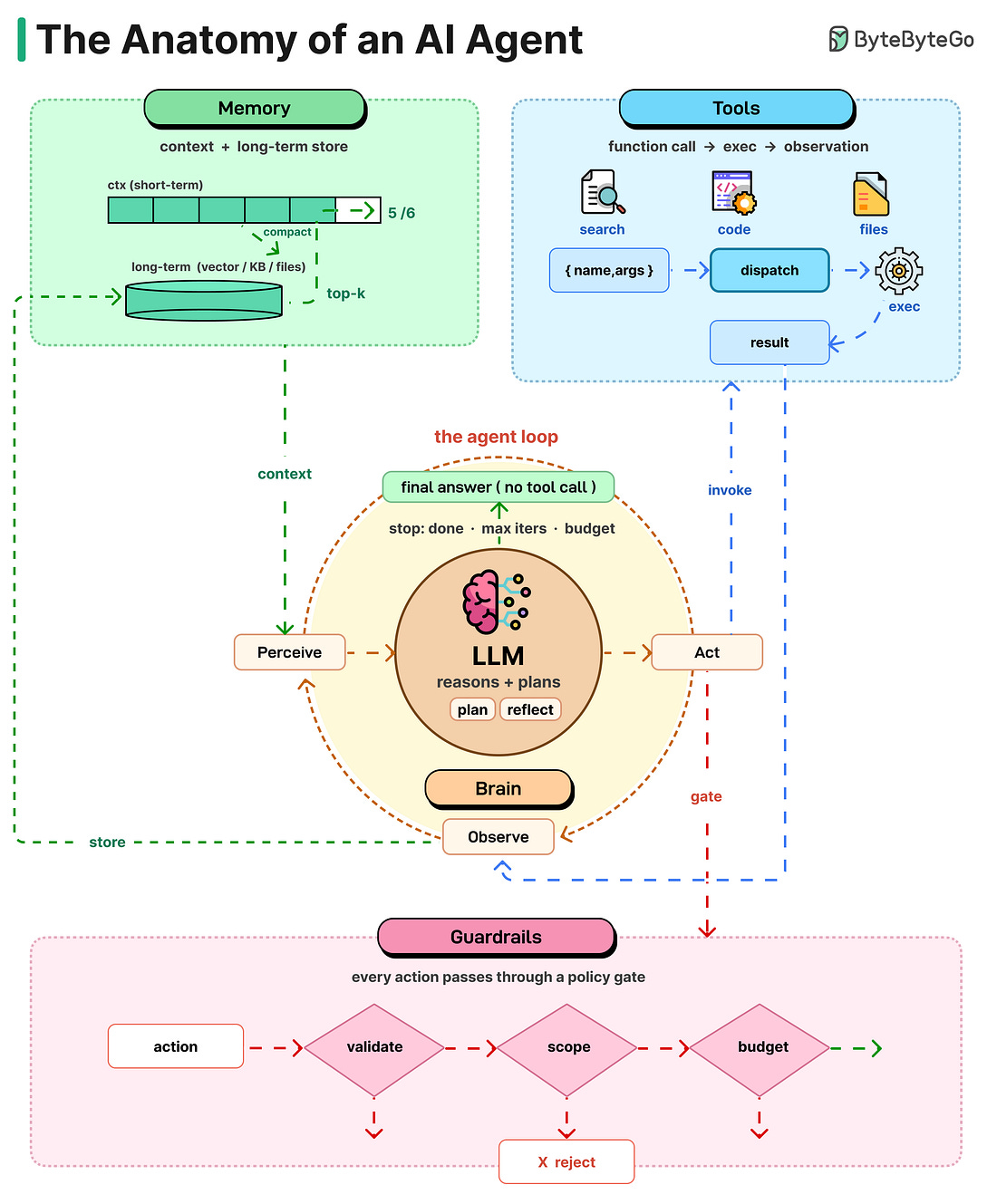

The Anatomy of an AI Agent

REST vs GraphQL vs gRPC

If Claude Code is a burger...

git fetch vs git pull vs git pull --rebase

Prompt Injection, Clearly Explained

The Anatomy of an AI Agent

An AI agent can be thought of as a simple while loop.

It uses an LLM to select an action, executes that action, evaluates the result, and repeats the process until the task is complete. Let’s take a closer look at each of these components:

Brain: The LLM is the core. It reads the situation, thinks, and decides what to do next. The big shift from chatbot to agent: the model isn't just writing text anymore; it's making choices.

Planning: Hard tasks need more than one step. Agents break them down using methods like Chain of Thought (think step by step), Tree of Thoughts (try options, pick the best), or Reflexion (learn from mistakes and retry). Planning turns a fuzzy goal into clear actions.

Tools: An LLM without tools is a brain in a jar. Tools are functions the model can call, like web search, code execution, APIs, files, or browsers (often using the MCP standard). The model requests a tool, the system runs it, and the result comes back.
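To make the request/run/return cycle concrete, here is a minimal sketch of a tool dispatcher. The tool names (`add`, `word_count`), the JSON request shape, and `run_tool_call` are assumptions made up for illustration; they are not the MCP protocol or any vendor's API.

```python
import json

# Hypothetical tools. A real agent would wrap web search, code execution, etc.
def add(a: float, b: float) -> float:
    return a + b

def word_count(text: str) -> int:
    return len(text.split())

# Registry mapping the names the model may request to Python callables.
TOOLS = {"add": add, "word_count": word_count}

def run_tool_call(request_json: str) -> str:
    """Run one model-issued tool request and return the result as JSON text."""
    request = json.loads(request_json)
    name = request.get("name")
    args = request.get("arguments", {})
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](**args)
    except TypeError as exc:  # wrong or missing arguments
        return json.dumps({"error": str(exc)})
    return json.dumps({"result": result})

# The model "requests" a tool by emitting structured output like this:
print(run_tool_call('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
```

The point of the sketch: the model never executes anything itself. It only emits a structured request, and the surrounding system decides whether and how to run it.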

Memory: Without memory, every turn starts from zero. Short-term memory is the context window. Long-term memory lives in vector stores, files, and knowledge bases. When the window fills up, agents summarize old turns and carry the summary forward.
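The "summarize old turns and carry the summary forward" idea might look roughly like the sketch below. Everything here is a simplifying assumption: `estimate_tokens` counts words instead of real tokens, and `summarize` is a placeholder where a real agent would ask the LLM for a summary.

```python
def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())

def summarize(turns: list[str]) -> str:
    # Placeholder: a real agent would call the LLM to summarize these turns.
    return f"Summary of {len(turns)} earlier turns"

def compact(history: list[str], budget: int, keep_recent: int = 2) -> list[str]:
    """If the history exceeds the budget, replace old turns with one summary."""
    total = sum(estimate_tokens(turn) for turn in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    old, recent = history[:-keep_recent], history[-keep_recent:]
    return [summarize(old)] + recent

history = ["a b c", "d e f", "g h", "i j"]
print(compact(history, budget=5))
```

Recent turns stay verbatim because they usually matter most for the next decision; only the older ones get compressed.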

Loop: All four pieces work together in a cycle. The agent looks at the current state, decides what to do, uses a tool, sees the result, and repeats. It keeps going until it gives a final answer.

Guardrails: Not strictly anatomy, but important. Sandboxing, human checks, token limits, output validation, and scope limits keep autonomy from turning into expensive chaos. The more autonomy you give, the more these matter.
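As a rough sketch, three of these guardrails (scope limits, token limits, and human checks) can be expressed as a check that runs before every tool call. The `Guardrails` class, its field names, and the returned status strings are all hypothetical; sandboxing and output validation are left out.

```python
from dataclasses import dataclass

@dataclass
class Guardrails:
    allowed_tools: frozenset    # scope limit: tools the agent may use at all
    needs_approval: frozenset   # tools that require a human sign-off
    token_budget: int           # hard cap on total tokens spent
    tokens_used: int = 0

    def check(self, tool: str, estimated_tokens: int, approved: bool = False) -> str:
        """Decide whether a proposed tool call may run."""
        if tool not in self.allowed_tools:
            return "blocked: tool out of scope"
        if self.tokens_used + estimated_tokens > self.token_budget:
            return "blocked: token budget exceeded"
        if tool in self.needs_approval and not approved:
            return "pending: human approval required"
        self.tokens_used += estimated_tokens  # charge the budget only when allowed
        return "allowed"

rails = Guardrails(
    allowed_tools=frozenset({"search", "send_email"}),
    needs_approval=frozenset({"send_email"}),
    token_budget=1000,
)
print(rails.check("search", 400))       # allowed
print(rails.check("send_email", 100))   # pending: human approval required
print(rails.check("delete_db", 10))     # blocked: tool out of scope
```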

Over to you: when you build an agent, which of these five takes the most work to get right?

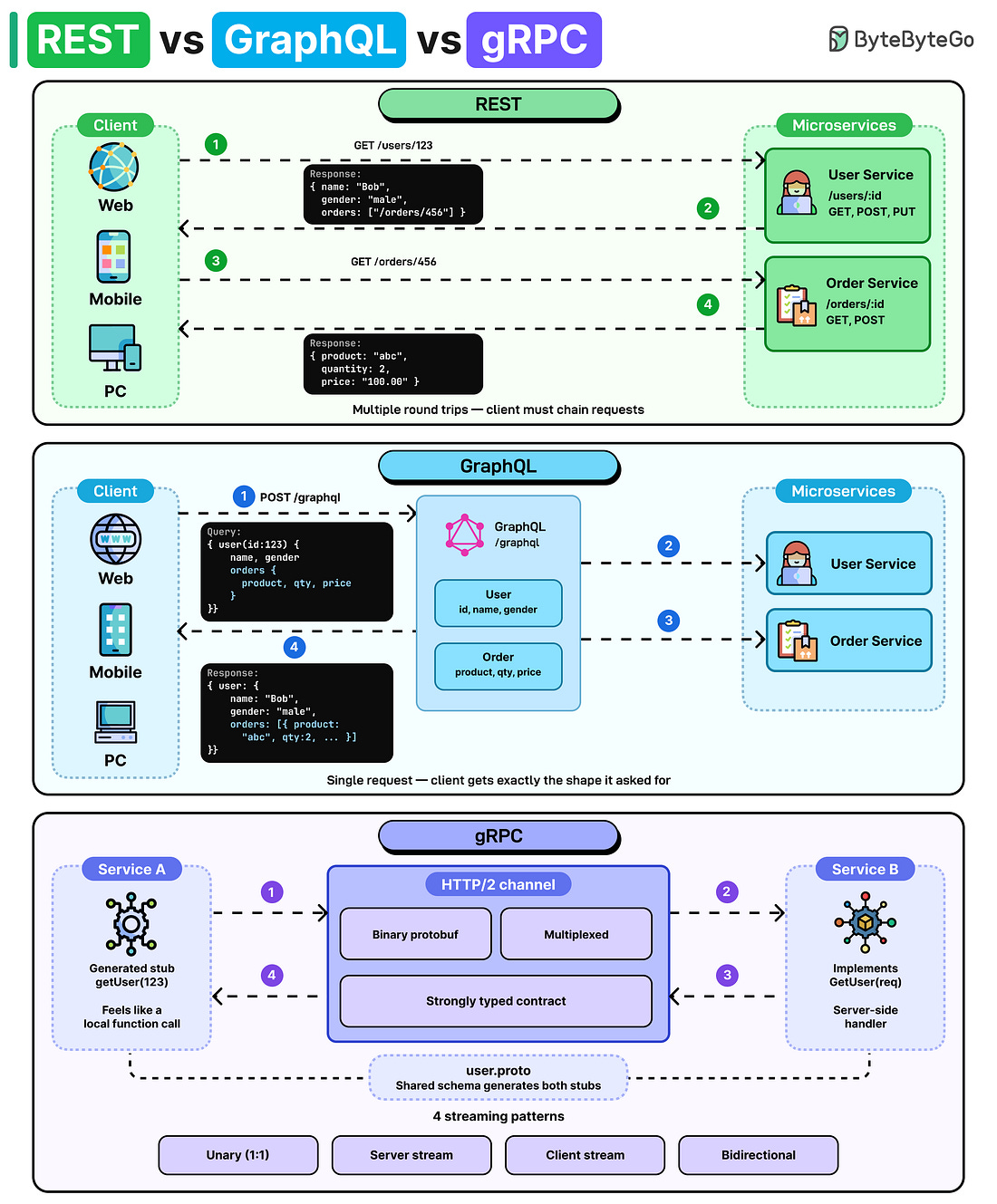

REST vs GraphQL vs gRPC

REST, GraphQL, and gRPC are three distinct approaches to designing APIs. Each offers a different trade-off between simplicity, performance, and flexibility.

REST: Each URL represents a resource, and you use standard HTTP verbs (GET, POST, PUT, DELETE) to act on it. Simple and universal, but it often requires multiple requests to assemble related data.

Trade-offs: Easy to learn, cache-friendly, and works with any HTTP client, but tends to over-fetch or under-fetch data, leading to chatty clients and version drift as endpoints proliferate.

GraphQL: The client sends a query describing exactly the data shape it needs, and the server returns precisely that data through a single endpoint.
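The difference in round trips and payload shape can be seen in a small in-memory simulation. Note the assumptions: there is no network and no real GraphQL engine here. `RestApi`, `GraphApi`, the sample data, and the dict-based field selection are stand-ins invented to show the idea.

```python
USERS = {1: {"id": 1, "name": "Ada", "email": "ada@example.com", "bio": "Engineer"}}
POSTS = {1: [{"id": 10, "title": "Hello", "body": "First post"}]}

class RestApi:
    """One URL per resource; each call returns the full resource."""
    def __init__(self):
        self.requests = 0

    def get(self, path: str):
        self.requests += 1
        parts = path.strip("/").split("/")   # e.g. ["users", "1", "posts"]
        user_id = int(parts[1])
        return USERS[user_id] if len(parts) == 2 else POSTS[user_id]

class GraphApi:
    """Single endpoint; the caller names exactly the fields it wants."""
    def __init__(self):
        self.requests = 0

    def query(self, user_id: int, selection: dict) -> dict:
        self.requests += 1
        user = USERS[user_id]
        out = {field: user[field] for field in selection["user"]}
        out["posts"] = [
            {field: post[field] for field in selection["posts"]}
            for post in POSTS[user_id]
        ]
        return out

rest = RestApi()
user = rest.get("/users/1")          # returns email and bio too (over-fetching)
posts = rest.get("/users/1/posts")   # second round trip for related data
print(rest.requests)                 # 2

graph = GraphApi()
print(graph.query(1, {"user": ["name"], "posts": ["title"]}))
print(graph.requests)                # 1
```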

Trade-offs: Eliminates over-fetching and lets frontends evolve independently, but shifts complexity to the server (resolvers, N+1 queries), complicates caching, and makes rate-limiting and query-cost analysis harder.

gRPC: Services communicate via strongly-typed method calls over HTTP/2 using compact binary (protobuf) encoding, making it ideal for fast, low-latency service-to-service communication with built-in streaming support.
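To give a feel for why the binary encoding is compact, the sketch below hand-encodes a tiny message in the protobuf wire format and compares its size with JSON. It is an illustration only: the `User` message (field 1 = integer `id`, field 2 = string `name`) is a made-up schema, the encoder handles just non-negative integers and strings, and real services would use generated protobuf code rather than anything hand-rolled.

```python
import json

def encode_varint(value: int) -> bytes:
    """Protobuf varint: 7 bits per byte, high bit set on all but the last byte."""
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)
        else:
            out.append(byte)
            return bytes(out)

def encode_user(user_id: int, name: str) -> bytes:
    """Encode the hypothetical message: field 1 = id (varint), field 2 = name (bytes)."""
    name_bytes = name.encode("utf-8")
    return (
        encode_varint((1 << 3) | 0) + encode_varint(user_id)   # key: field 1, wire type 0
        + encode_varint((2 << 3) | 2)                           # key: field 2, wire type 2
        + encode_varint(len(name_bytes)) + name_bytes           # length-prefixed string
    )

binary = encode_user(150, "Ada")
as_json = json.dumps({"id": 150, "name": "Ada"}, separators=(",", ":")).encode("utf-8")
print(binary.hex())                # 0896011203416461
print(len(binary), len(as_json))   # 8 23
```

Field names never travel on the wire; only small numeric tags do, which is where much of the size saving comes from. The cost is that both sides need the schema to make sense of the bytes.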