
Technology


- The hottest ticket at GTC

- Where AI answers come from

- A new tech bill VCs like

- Microsoft v. OpenAI?

- Social media safety unites

The US government needs to reprioritize R&D, and AI’s explosive phase is only just beginning.


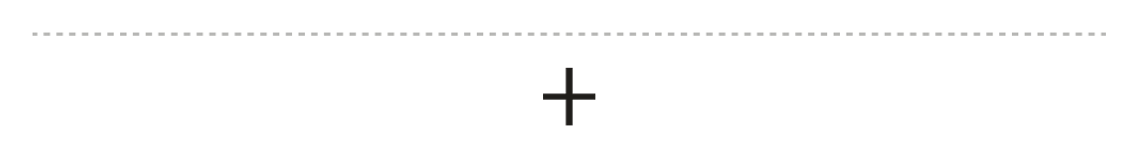

After Meta’s reckoning with Horizon Worlds, its virtual-reality platform, a chorus of “I told you so”s rang out from critics of Mark Zuckerberg’s more than $70 billion Metaverse bet (and, of course, of his decision to rename his company after it). It’s the right call, but the wrong takeaway. Virtual reality was a dead end for Meta, but not because Zuckerberg’s vision of the future is wrong. It’s because the technology needed to make it possible is too far off. Virtual-reality headsets still make many users physically ill if they wear them for too long or use poorly built apps. Solving that problem will take faster computer processors, as well as scientific breakthroughs in optics, sensors, and batteries.

This is an issue not just for Meta but for future economic growth and technical progress. Scientific breakthroughs aren’t keeping up with our collective imagination or with the capital available to fund new innovation, and VR is just one example. US federal spending on research and development as a percentage of GDP has dropped from a Cold War high of nearly 2% to about 0.6% today. The rewards from those Cold War breakthroughs (semiconductors, lithium-ion batteries, and software automation) are starting to peter out. Moore’s law is fading as an organizing principle, and we’re trying to make up for it by building bigger and bigger computer networks. But even manufacturing enough chips is proving challenging. The world is now facing major shortages of computer memory and even of old-fashioned spinning hard drives like the ones you would slide into your computer in the mid-1990s. Nobody has come up with anything better to meet AI-driven demand and the enormous economic opportunity it represents.

Even the US military, which helped develop many of the scientific advances that drive the economy today, is struggling to fend off drones built from off-the-shelf parts and is hiring former investment bankers to help procure better technology, as my colleague Liz Hoffman scooped. But if there’s any business the US government needs to get into right now, it’s funding basic scientific research. We have plenty of capitalism, and plenty of capital. We have plenty of entrepreneurs willing to innovate. What we’re running low on is scientific breakthroughs.


Nvidia’s ‘Claw Bar’ was a pricey wake-up call

Nvidia’s DGX Spark. Courtesy of Nvidia.

One of the hottest (literally) events at Nvidia’s big conference this year was the “Claw Bar.” San Jose conference-goers lined up in 90-degree heat to learn how to use OpenClaw, the popular agent system Nvidia CEO Jensen Huang praised all week. I lined up too, next to a tech retiree who wanted to set up agents to juice his personal investments with real-time updates on new property foreclosures and city projects. An Nvidia employee working the booth wanted an agent to pay his parking tickets. The demo turned out to be more of a sales pitch for Nvidia’s DGX Spark, a desktop computer that runs AI models locally, than an OpenClaw how-to. The Nvidia engineer explained that it’s difficult to run anything much more complex than a weather check without something like DGX Spark, which was conveniently for sale and parked nearby. The retiree asked about the price: “Four hundred?” “Four thousand,” the Nvidia engineer said. Nvidia says people are ponying up for the technology, but the exchange reminded me of a joke going around Silicon Valley: Given stubbornly high token prices, it costs $87 to have OpenClaw make a restaurant reservation. A joke, but probably not far off. — Rachyl Jones


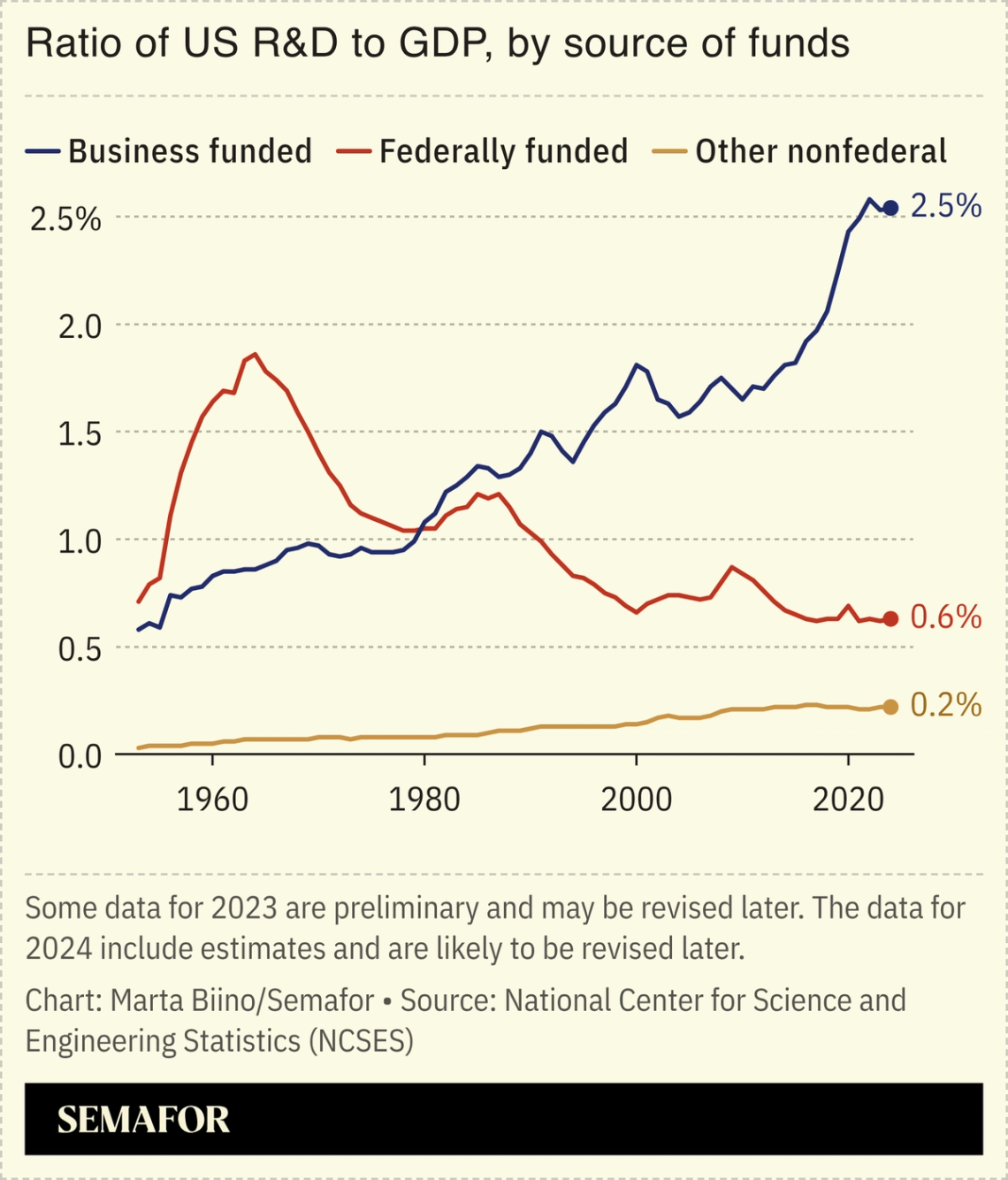

Chatbots are learning from Reddit and LinkedIn

It turns out that what the growing class of LinkedInfluencers says sort of matters. Top AI models most often cite Reddit and LinkedIn when delivering answers and content to users, according to a recent study by AI marketing company Semrush. For marketers, this means posts on social media from a company and its employees directly influence how the brand appears in AI results. And unlike the internet norms of the last decade, virality doesn’t drive uptake. For consumers, there’s a different takeaway. Reddit, LinkedIn, and many of the other domains AI most often cites aren’t exactly the pinnacle of objective, quality information. It’s a reminder to take AI recommendations with a grain of salt: ChatGPT’s advice on how to ask for a raise or break up with a significant other may be little more than an amalgamation of Redditors’ opinions (the good, the bad, and the ugly). — Rachyl Jones


Apple’s fight against vibe coding

Apple’s headquarters in Cupertino. Loren Elliott/Reuters.

California state Sen. Scott Wiener, who has drawn the ire of Silicon Valley with his bills regulating AI, has introduced a new bill that some venture capitalists actually support. The so-called Blocking Anticompetitive Self-preferencing by Entrenched Dominant Platforms Act (BASED Act) goes after big tech companies that stomp on young startups to protect their home turf. But the A in BASED might as well stand for Apple. The same day the bill was announced, The Information revealed that Apple was secretly blocking vibe-coding startups like Replit and Vibecode from updating their apps. Vibe coding poses a threat to Apple because it lowers the barrier to entry to its prized App Store and has contributed to a flood of new iOS apps. One day, it could threaten walled gardens like Apple’s, because it allows anybody to replicate complex software that, for decades, only big tech companies could provide. — Reed Albergotti


When the business world moves, these are the people turning the wheel. Introducing The CEO Signal, a new video and audio series hosted by Penny Pritzker, founder and chairman of PSP Partners and former US Secretary of Commerce, and Andrew Edgecliffe-Johnson, CEO editor at Semafor. Episodes are released every two weeks. Building on The CEO Signal newsletter, the essential briefing read by the world’s top chief executives, the show brings that perspective to revealing conversations with the people steering the world’s biggest companies. In the debut episode, Andrew and Penny sit down with Starbucks CEO Brian Niccol. Now 18 months into his tenure, Niccol has launched his “Back to Starbucks” campaign — an effort to revive the brand’s classic coffeehouse feel, including baristas writing names on cups again. In the conversation, Niccol explains how he’s rallying Starbucks’ global workforce behind one of corporate America’s most closely watched turnarounds — and working to restore momentum to one of the world’s most recognizable brands. Niccol also reflects on why he tends to step into difficult situations — from Chipotle’s crisis to Starbucks’ reset — and what it takes to lead a company through moments of pressure.


Microsoft’s options to rein in OpenAI

Microsoft Vice Chair Brad Smith and OpenAI CEO Sam Altman. Jonathan Ernst/Reuters.

As a cub reporter at The Wall Street Journal, I learned an important lesson: Anyone can threaten a lawsuit. Things get serious when people actually file one. Still, Microsoft’s threats to sue OpenAI, scooped by the FT, signal an escalating fight that won’t end particularly well if the two sides can’t hash out a deal. At issue is who’s allowed to sell OpenAI’s tokens, and how. As it stands, Microsoft is the only cloud provider allowed to sell access to OpenAI’s models through an API, which is a bit like being able to order à la carte rather than being forced to eat a family-style meal. With massive demand for its models, OpenAI also wants to sell through Amazon Web Services and, Microsoft alleges, has tried to find loopholes in its exclusivity agreement with Microsoft. Microsoft has threatened to sue, if necessary, to stop that. But here’s the question everyone should be asking: Why is exclusivity so important to Microsoft? It could just as easily tell OpenAI, “If you want to sell API access through AWS, give us a cut.” Its insistence on exclusivity shows that, even in the age of AI, with a rapidly expanding pie, the tech giants are still thinking a lot about “lock-in.” In other words: How can I make sure people have to use my service, even if they might otherwise choose a different one? This is a dangerous way of thinking. Tactics that worked in the pre-AI universe are being overwhelmed by the sheer force of the rapid disruption AI is causing. — Reed Albergotti


Children and chatbots unite rivals

Randi Weingarten speaking at Semafor’s State of Happiness event. Kris Tripplaar/Semafor.

The issue of children’s access to chatbots is uniting even the unlikeliest of groups. Moms for Liberty, the political organization trying to get race and LGBTQ themes out of schools, has aligned with one of America’s largest teachers’ unions. The two groups “haven’t gotten along on almost anything,” Randi Weingarten, president of the American Federation of Teachers, said at Semafor’s State of Happiness event Thursday. “We’re together on this because we’re seeing what is happening to our kids, and it really is us against [the] money.” Meanwhile, lawmakers have failed to pass any meaningful online-safety regulation. At the event, Rep. Kat Cammack, a Republican from Florida, highlighted the standoff between the House and Senate on online safety and tech regulation, with House members looking for insight into the algorithms and the Senate seeking control over how they are built. She joked, “In the House, we always say, ‘the other party is the opposition, but the Senate is the enemy.’” “There’s going to have to be a tremendous amount of work done to even get us to a space where we can get in the room and hash out the differences,” she said.


Humanoid robots being assembled in Beijing. Maxim Shemetov/Reuters.

AI’s explosive stage may be beginning. Computing pioneer I.J. Good wrote in 1965 that once intelligent machines could design even more intelligent ones, there would “be an ‘intelligence explosion,’” and the first such machine would be “the last invention” humanity need ever make. Some researchers think this kind of “recursive self-improvement” is already here. Anthropic, Google DeepMind, and OpenAI all report that much of the code used to improve their models is AI-written, and staff at Anthropic told Time that full research automation could be a year away and that recursive self-improvement is “not a future phenomenon [but] a present phenomenon.” Political institutions are not ready for the pace of change this could bring, Transformer wrote: Imagine enormous breakthroughs “not every few months, but… every few days.”
