
Superintelligence is already here, today

It's going to revolutionize science. It also might take control of this planet.

People argue back and forth about when artificial superintelligence will arrive. The truth is that it’s already here.

Go back a hundred years, and the popular notion of “intelligence” would probably include things like calculating speed and memorization. Then we invented computers, which could memorize and recall vastly more things than we could, and do calculations vastly faster. But we didn’t want to call those capabilities “intelligence”, because we recognized that although they were very powerful, they were very narrow. So we started to use the word “intelligence” to refer to the things machines still couldn’t do: various forms of pattern-matching, logical reasoning, communicating through natural language, and so on.

Even before the invention of modern AI, though, computers were already participating in frontier research. The four-color theorem is a famously hard math problem that stumped humans until the 1970s, when some mathematicians used a computer to prove it. The humans figured out how to reduce the theorem to a very large but finite number of cases, which could then be checked by brute force. So the computer did a mental task that humans couldn’t, and the result was a scientific breakthrough.
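
To make "checking a very large number of cases" concrete, here is a toy sketch in Python. It is not the actual four-color proof, which worked on a carefully chosen set of map configurations rather than on whole maps. The map below (`BORDERS`) and the function names are invented purely for illustration. The code simply tries every possible coloring of a tiny five-region map and counts the ones where no two neighboring regions share a color.

```python
from itertools import product

# Hypothetical toy map (an assumption for illustration): each pair is two
# regions that share a border. A, B, C, and D all touch each other; E only
# touches D.
BORDERS = {
    ("A", "B"), ("A", "C"), ("A", "D"),
    ("B", "C"), ("B", "D"), ("C", "D"),
    ("D", "E"),
}
REGIONS = sorted({region for pair in BORDERS for region in pair})


def valid_colorings(num_colors):
    """Yield every coloring in which no two bordering regions share a color."""
    # Brute force: enumerate all num_colors ** len(REGIONS) assignments.
    for colors in product(range(num_colors), repeat=len(REGIONS)):
        assignment = dict(zip(REGIONS, colors))
        if all(assignment[a] != assignment[b] for a, b in BORDERS):
            yield assignment


def count_valid(num_colors):
    """Count the valid colorings by exhaustively checking every case."""
    return sum(1 for _ in valid_colorings(num_colors))


if __name__ == "__main__":
    for k in (3, 4):
        print(f"{k} colors: {count_valid(k)} valid colorings "
              f"out of {k ** len(REGIONS)} cases checked")
```

For this toy map, three colors never work and four colors do. A human could verify that by hand here, but the number of cases grows exponentially with the number of regions, which is exactly the kind of work a computer is good at and a person is not.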

In the 2020s, we invented computer systems that could do most of the kinds of cognitive tasks that previously only humans could do. They can read, understand, and speak in human language. They can do mathematics, which is really just a language with very formal rules (this means they can also do theoretical physics). They can recognize complex patterns of knowledge embedded in written text, and apply those patterns to produce actionable insights. They can write software, because software is also just a language with formal rules. It turns out that all computers really needed in order to do all of this stuff was A) statistical regressions to identify patterns probabilistically, and B) a very large amount of computing power.

This doesn’t mean that AI can now do everything a human being can do. Its intelligence is “jagged” — there are still some things humans are better at. But this is also true of human beings’ advantages over animals. Did you know that chimps are better than humans at game theory and have better working memory? My rabbit can distinguish sounds much more sensitively than I can. If we were capable of creating business contracts with chimps and rabbits, we might even pay them for these services. Similarly, AI might not take all of humans’ jobs. But no one in the world thinks that chimps’ and rabbits’ superiority on a narrow set of cognitive tasks means that humans “aren’t truly intelligent”. We are jagged general intelligences as well.

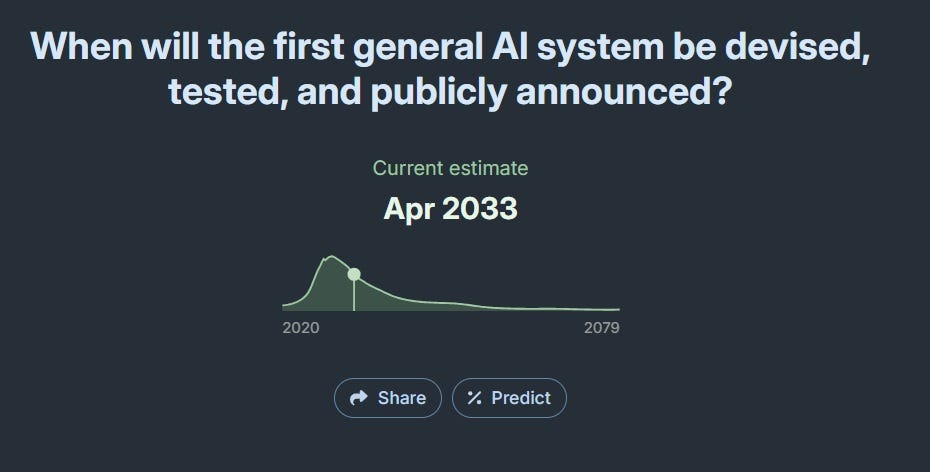

Most of the benchmarks that aim to measure whether we’ve achieved “AGI” (things like ARC-AGI and Humanity’s Last Exam) focus on the kinds of things that computers couldn’t do in 2021: the things that gave humans our irreplaceable cognitive edge before AI came along, and made us highly complementary to computers. And most of the discussion around “AGI” is about when AI will surpass humans at everything. For example, Metaculus forecasters still think AGI is in the future.


This may be the most important question from an economic standpoint, i.e., whether we expect AI to replace human jobs or augment them. But if what we’re talking about is domination of the planet’s resources, and control of the destiny of life on Earth, we don’t actually need AI to be better at every cognitive task. Humans took the planet from the other animals despite having worse working memory than chimps and being worse at differentiating sounds than rabbits.

In fact, I bet that if AI had A) permanent autonomy and long-term memory, B) highly capable robots, and C) end-to-end automation of the AI production chain, it could defeat humans and take control of Earth today. I might be wrong about that, but if so, I doubt I’ll be wrong three or four years from now. In any case, if we decide we don’t want to hand over control of the planet to an alien intelligence, we should think about restricting A) full autonomy, B) robots, and/or C) full automation of the AI production chain.¹

That’s a sidetrack from my real point, though. My real point here is that AI, as it exists today, is already superintelligent. The reason is that AI can already do language and concepts and pattern recognition well enough, while also being able to do all the superhuman, fantastic, incredibly powerful things that a computer could do in 2021.

Right now, today, AI can do mental tasks that no human can do. In a few minutes, it can read an entire scientific literature, and extract many of the basic conclusions and insights from that literature. No human can do that. A single human can be an expert in one or two complex subjects; an AI can be an expert in all of them at once. A human needs to eat and sleep and take breaks; an AI agent can work tirelessly at proving a theorem or writing code. And AI can prove theorems and write code — or write paragraphs of text — much, much faster than any human.

These are all superhuman cognitive capabilities. They go far, far beyond anything that even the smartest human being can do. They are the result of combining the roughly human-level language ability, pattern recognition, and conceptual analysis of an LLM with the superhuman memory, speed, and processing power that computers already had before 2022.

I don’t want to get sidetracked here, but I think there’s a nonzero chance that AI never gets much better than humans at most of the things that humans were better than computers at in 2021. It seems possible that humans are simply incredibly specialized in a few types of cognitive tasks — extracting patterns from sparse data, synthesizing various patterns into “intuition” and “judgement”, and communicating those patterns in language — and that we’ve basically approached the theoretical maximum in those narrow areas.

That would explain why AI has gotten much better at things like math and coding and forecasting over the last year, while the basic chatbot interface doesn’t seem much more “intelligent”. It would also explain why when you talk to Terence Tao about math, it’s like talking to a superhuman, but when you talk to him about where to get lunch or which movies are the best, he’ll just sound like a fairly smart normal dude. AI will eventually get better than Tao at math, because it’s a computer, and computers are inherently good at math. But it may never get much better than the most thoughtful, eloquent humans at deciding where to get lunch or recommending movies. It may simply not be mathematically possible to get much better than we already are at that sort of thing.

In fact, this is what AI is basically like in Star Trek: The Next Generation, my favorite science fiction show of all time — and the one that I think best predicted modern AI. The show has two types of AGI — the ship’s computer, which eventually creates superhuman sentience via the Holodeck, and Data, an android built to simulate human intelligence. Both the ship’s computer and Data are approximately human-equivalent when it comes to taste, judgement, intuition, and conversational ability. But they are far superior when it comes to math, scientific modeling, and so on.²

It makes sense that the big differentiator between humans and AI would not be superior taste, judgement, and intuition, but things like computation speed and memory. Those are things humans are especially weak at, because we have very limited room in our little organic brains. It makes sense that humans would evolve to specialize in the type of thing we could get maximum leverage out of — recognizing and communicating patterns embedded in sparse data. And it makes sense that when we started automating cognitive tasks, we started out by going for the things we were weakest at, because those had the greatest marginal benefit.

In other words, the advent of LLMs, reasoning chains, and agents may simply be a “last mile” event in terms of creating superhuman intelligence — filling in an essential gap that humans were previously specialized to fill. The biggest marginal gains of AI over human brains may always come from the pieces we already had in place before 2022 — the ability to scan a whole corpus of literature in seconds, to perform computations at lightning speed, and to hold vast amounts of information