Hi there,

Most developers review AI code the same way they review their own.

You scan top to bottom. The variables look right. The structure looks clean. You skim past the parts that look familiar because they look familiar. And the bug ships.

The reason this fails is that you're reviewing code that was never typed. AI doesn't mistype a variable name. The bugs sit a layer above syntax: wrong claims, missing verification, broken traces.

Claim → Verify → Trace

Three words. Works on any block, in any language, today.

For each meaningful chunk of AI code, do this.

Claim. State out loud what this block claims to do. One sentence. "This validates the email format before storing it." If you can't state the claim, you don't understand the code well enough to approve it. That's the signal to slow down.

Verify. Check that the implementation actually does what the claim says. Not that it looks like it does. That it does. Does it actually validate the format, or does it just check the string isn't empty?

Trace. Follow what happens when the input is wrong. What if the input is null? What if it's malformed? Does the error get handled, or swallowed? The trace step is what catches bugs that pass tests but break in production.
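To make the three steps concrete, here is a minimal sketch built on the email example above. Both function names and the regex are assumptions made up for illustration, and the pattern is deliberately simple rather than a complete email validator.

```javascript
// Hypothetical helpers for illustration only; names and pattern are assumptions.

// Claim: "This validates the email format before storing it."
// Verify: the body only checks that the string is not empty,
// so the claim and the code disagree.
function validateEmailLoose(email) {
  return email.length > 0;
}

// A version whose body matches the claim.
// The pattern requires something@something.something with no whitespace.
function validateEmail(email) {
  // Trace: null, undefined, and non-strings are rejected instead of crashing.
  if (typeof email !== "string") return false;
  return /^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email);
}

// Trace a wrong input through both versions.
const looseAcceptsGarbage = validateEmailLoose("not an email"); // true
const strictRejectsGarbage = validateEmail("not an email");     // false
```

The loose version also throws a TypeError when handed null, which is the kind of thing the Trace step is meant to surface before production does.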

That's it. Three steps per block. Takes about a minute once you're used to it.

Here's what it looks like on real code.

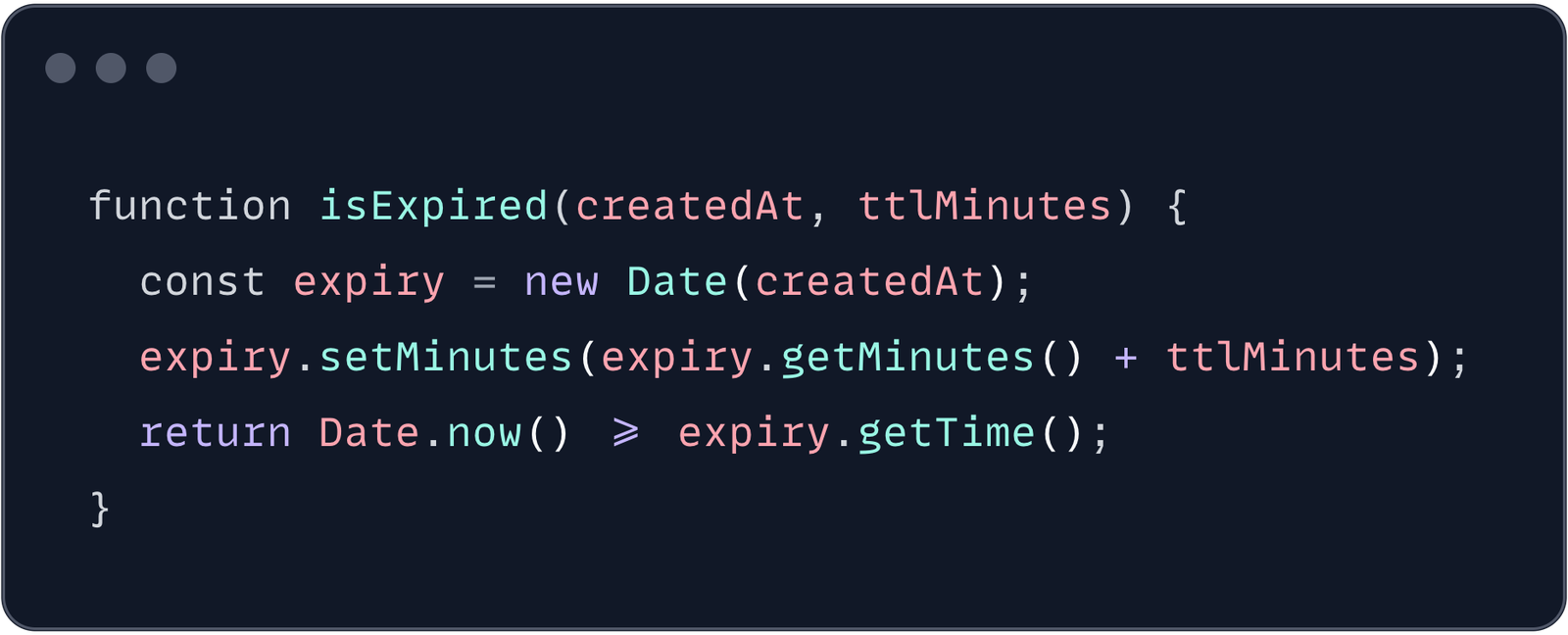

Say AI generates a five-line function.

Looks clean. You'd skim past it.

Now run the method.

Claim. "This returns true if a record is past its expiry."

Verify. Does it? It adds the TTL to the creation time and checks if now is past it. The logic is right. But look at what it trusts. new Date(createdAt) accepts whatever you hand it. If createdAt is a string like "2026-05-06 14:30:00" with no timezone, the format isn't one the ECMAScript spec defines, and engines such as V8 parse it as local time. If your server runs in UTC and the timestamp was generated in Central European time, the parsed value is off by one hour (CET) or two (CEST). The claim said past its expiry. The code says past its expiry, assuming the input is unambiguous. It isn't.
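The size of that gap can be shown without depending on your machine's timezone by parsing the same wall-clock reading under two explicit offsets. This is a sketch: parseTimestampStrict and HAS_OFFSET are hypothetical names, and rejecting offset-less strings is one possible policy, not the only one.

```javascript
// The same wall-clock reading interpreted under two explicit offsets.
const asUtc = new Date("2026-05-06T14:30:00Z");
const asCentralEurope = new Date("2026-05-06T14:30:00+02:00"); // CEST in May

// 14:30 UTC versus 12:30 UTC: a two-hour difference in milliseconds.
const gapMs = asUtc.getTime() - asCentralEurope.getTime();

// Hypothetical guard (an assumption, not part of the reviewed function):
// accept only ISO 8601 strings that end in Z or a numeric offset.
const HAS_OFFSET =
  /^\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}(\.\d{1,3})?(Z|[+-]\d{2}:\d{2})$/;

function parseTimestampStrict(value) {
  if (typeof value !== "string" || !HAS_OFFSET.test(value)) {
    throw new TypeError("Timestamp needs an explicit UTC offset: " + String(value));
  }
  const parsed = new Date(value);
  if (Number.isNaN(parsed.getTime())) {
    throw new RangeError("Timestamp is not a valid date: " + value);
  }
  return parsed;
}
```

With a guard like this, "2026-05-06 14:30:00" fails loudly at the boundary instead of being quietly shifted by whatever timezone the server happens to run in.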

Trace. What if createdAt is null? new Date(null) is the Unix epoch, January 1st 1970. Add the 15 minutes of TTL and the expiry is still in 1970. The function returns true for every record with a missing timestamp. Silent. No error thrown. You only catch this when it breaks in production.
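Here is that trace as runnable code. The isExpired function below is an assumed reconstruction from the description in this email (TTL added to the creation time, compared against now), not a copy of the original, and isExpiredChecked is a hypothetical guarded variant.

```javascript
const TTL_MS = 15 * 60 * 1000; // 15 minutes, as in the walkthrough

// Assumed shape of the function under review.
function isExpired(createdAt, now = Date.now()) {
  const expiresAt = new Date(createdAt).getTime() + TTL_MS;
  return now > expiresAt;
}

// new Date(null) is the epoch, so a missing timestamp looks long expired.
const epochMs = new Date(null).getTime(); // 0
const nullLooksExpired = isExpired(null); // true, no error thrown

// Hypothetical guarded variant: fail loudly on missing or unparseable input.
function isExpiredChecked(createdAt, now = Date.now()) {
  if (createdAt === null || createdAt === undefined) {
    throw new TypeError("createdAt is missing");
  }
  const createdMs = new Date(createdAt).getTime();
  if (Number.isNaN(createdMs)) {
    throw new RangeError("createdAt is not a valid date");
  }
  return now > createdMs + TTL_MS;
}
```

Worth tracing undefined as well: new Date(undefined) is an invalid date, the comparison against NaN is false, and the unguarded function reports that the record never expires. Same missing data, opposite answer.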

Two real bugs in five lines that looked completely clean.

Reading top to bottom lets your eyes do the work. Claim → Verify → Trace forces your judgment into the loop. You can't autocomplete your way through it. Every block makes you stop and decide whether the code matches the intent.

Try it on the next AI-generated block you review. Pick one block of code. State the claim out loud. Verify the implementation. Trace a wrong input.

Most developers find at least one thing they'd want to fix on the first try. Some find the bug they've been chasing for two days.

This is what we do at Unlearn. Practical methods for working with AI code, the kind you can actually use right away.

Best,

Alex GS

Tech Education Lead