Almost Timely News: 🗞️ Advancing Up the 5 Levels of AI (2026-04-19)

Don't reach for something that isn't there

The Big Plugs

So many new things!

3️⃣ A free 25 minute webinar Katie and I did on GEO - even though it says the date is past, it still works and takes you to the recording.

Content Authenticity Statement

100% of this week’s newsletter content was originated by me, the human. You’ll see me working with Gemini and Claude in the video version. Learn why this kind of disclosure is a good idea and might be required for anyone doing business in any capacity with the EU in the near future.

Watch This Newsletter On YouTube 📺

Click here for the video 📺 version of this newsletter on YouTube »

Click here for an MP3 audio 🎧 only version »

What’s On My Mind: Advancing Up the 5 Levels of AI

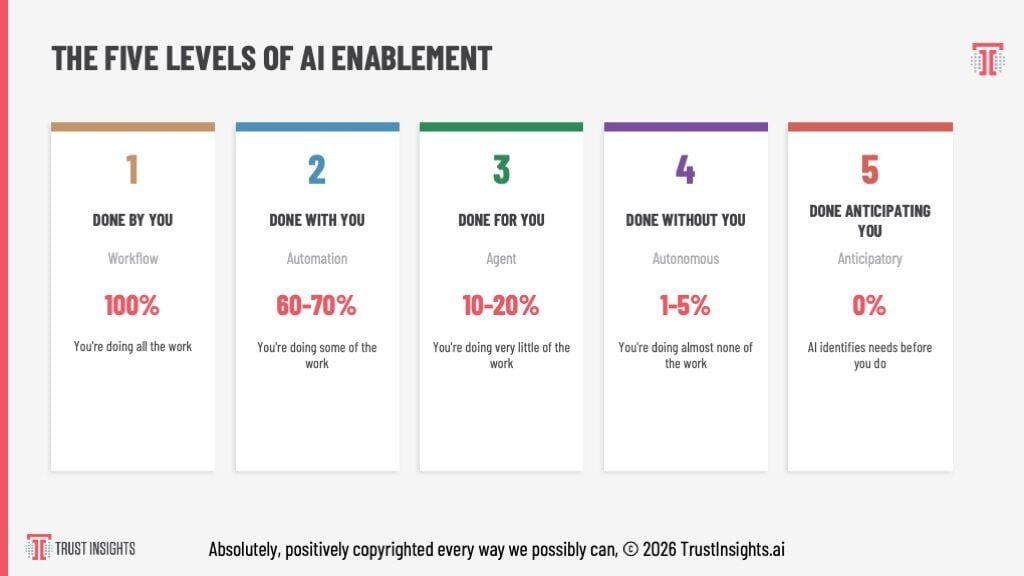

Last month, I shared with you my new framework for understanding where we are, as individuals and organizations, when it comes to AI enablement - what we can be doing with AI. If you missed that issue, you can find it here. That framework has formed the basis of my recent keynotes, and this week, I’ll take you through how to bring it to life.

Frameworks are great, but frameworks that contain clear, prescriptive actions are even better.

To get where we want to go, we should think about where we’ve been.

Part 1: A Brief, Incomplete History of AI

AI has been around in some form since the 1950s, though only in the last 40 years have we had enough computational power to make it useful. Prior to 2017, most AI was what we now call classical AI or machine learning - lots and lots of math and probability in two main categories, regression and classification.

Regression is, given an outcome, either predicting more of that outcome or explaining how we got to that outcome. If you’ve ever done lead scoring or attribution analysis, that’s regression analysis.
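To make the lead scoring idea concrete, here is a minimal sketch of a regression: fitting a straight line with ordinary least squares. The data (emails opened versus deal size) and the function names are made up for illustration; real lead scoring would use many more variables and a proper statistics library.

```python
from statistics import mean

# Hypothetical data: emails a lead opened, and the deal size that followed.
emails_opened = [1, 2, 3, 4, 5]
deal_size = [120, 190, 310, 400, 480]

def fit_line(xs, ys):
    """Ordinary least squares for one predictor: returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    variance = sum((x - x_bar) ** 2 for x in xs)
    slope = covariance / variance
    return slope, y_bar - slope * x_bar

slope, intercept = fit_line(emails_opened, deal_size)

def predict_deal_size(opens):
    """Predict more of the outcome from the fitted line."""
    return slope * opens + intercept

print(slope, intercept)      # 93.0 21.0
print(predict_deal_size(6))  # 579.0
```

The slope is the "explaining" half (each extra email opened goes with about 93 more in deal size, in this toy data), and `predict_deal_size` is the "predicting" half.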

Classification is organizing your data. Anyone who’s used email spam filters since 1999 has used classification AI in some capacity, even if you didn’t know it.
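A spam filter can be sketched in a few lines as well. The example below is a toy naive Bayes classifier, assuming a tiny hand-labeled training set that is entirely made up; production filters use far more data and features, so treat this as an illustration of the idea rather than how any particular email provider does it.

```python
import math
from collections import Counter

# Hypothetical, hand-labeled training examples.
TRAINING = [
    ("win free money now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch on monday with the team", "ham"),
]

def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Return the label with the highest log-probability for the text."""
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    total_examples = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        total_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / total_examples)
        for word in text.split():
            # Add-one smoothing so unseen words do not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

word_counts, label_counts = train(TRAINING)
print(classify("free money", word_counts, label_counts))      # spam
print(classify("monday meeting", word_counts, label_counts))  # ham
```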

The challenge with these two branches of AI is that the mechanisms to make them work required a tremendous amount of code and statistics. If you didn’t know R or Python or Scala or Julia, your ability to derive significant benefit from them was low.

In 2017, researchers at Google introduced an architecture called the transformer - no relationship to the 1980s toy line, unfortunately - that was originally intended for language translation. Given enough data to train on and a novel mechanism, attention, that takes into account all the data in an input and reprocesses it as each new piece of output is generated, the transformer architecture achieved totally new capabilities.

Fast forward 5 years, and OpenAI released a very powerful (at the time) model called GPT-3.5 and a web interface to it, ChatGPT, starting what we now know as the generative AI revolution. GPT-3.5 had a short term memory of 8192 tokens, or roughly 5,000 words; users could interact with it and generate outputs up to 3,000 words.

But very quickly, folks realized that starting a new conversation with it meant literally starting over. It remembered nothing, and its background knowledge - 175 billion parameters - contained a very incomplete knowledge of the world. More important, its memory was probability-based. It returned answers that matched high probabilities, not factual correctness. Back then, as late as 2021 and early 2022, you could ask a GPT-series model a question like “Who was president of the United States in 1492?” and it would answer - quite confidently - that it was Christopher Columbus.

Why did it do that? Because when the model broke that question down into probabilities, it weighed the different pieces in it: the United States as an entity, the president as an important role, and 1492 as an important date. Probabilistically, the name Christopher Columbus was the highest-probability answer, even though it’s factually wrong. As a name, Christopher Columbus is strongly associated with both America and 1492.
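Here is a toy sketch of that behavior. The association numbers are invented purely for illustration and do not come from any real model, and a real language model works on tokens with billions of learned weights rather than a lookup table. The point is only that picking the highest-probability answer is not the same as picking the correct one.

```python
import math

# Made-up association strengths between pieces of the question and candidate
# answers. These numbers are illustrative only, not taken from any real model.
ASSOCIATIONS = {
    "Christopher Columbus": {"1492": 3.0, "United States": 1.0, "president": 0.2},
    "George Washington": {"1492": 0.0, "United States": 1.5, "president": 2.0},
    "Nobody (no such office yet)": {"1492": 0.5, "United States": 0.2, "president": 0.3},
}

def answer_probabilities(question_terms):
    """Turn summed association scores into probabilities with a softmax."""
    scores = {
        answer: sum(weights.get(term, 0.0) for term in question_terms)
        for answer, weights in ASSOCIATIONS.items()
    }
    total = sum(math.exp(score) for score in scores.values())
    return {answer: math.exp(score) / total for answer, score in scores.items()}

probabilities = answer_probabilities(["president", "United States", "1492"])
best_answer = max(probabilities, key=probabilities.get)
print(best_answer)  # Christopher Columbus
```

Nothing in this sketch checks whether the answer is true. The factually correct option loses simply because it is weakly associated with the words in the question.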

Those two limitations - a relatively small short term memory (what we call the context window) and a probability-based long term memory - are what severely limited the usefulness of generative AI in its early days.
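One way to picture a context window is as a budget that only the most recent conversation fits into. The sketch below is a simplification under stated assumptions: it counts whitespace-separated words as a crude stand-in for tokens, and the function name `fit_to_context` is hypothetical, not any vendor's API.

```python
def fit_to_context(messages, max_tokens):
    """Keep the most recent messages whose combined size fits the window."""
    kept, used = [], 0
    for message in reversed(messages):
        cost = len(message.split())  # crude stand-in for a real tokenizer
        if used + cost > max_tokens:
            break  # everything older than this falls out of memory
        kept.append(message)
        used += cost
    return list(reversed(kept))

conversation = ["my name is Chris", "I like coffee", "what is my name"]
print(fit_to_context(conversation, max_tokens=8))
# ['I like coffee', 'what is my name']
```

With a budget of 8, the first message no longer fits, so the model in this toy example would have no way to answer the final question.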

Tech companies quickly realized that if AI models continued being solely probability-based, they would never be broadly adopted, so virtually all of them adopted a fact-checking system grounded in web search, which gave birth to what we now call GEO/AEO/AIO/etc. Consumer-facing AI interfaces now use web search by default to validate what they’re doing and what answers they give.

Those limitations - no persistent memory, a temporary short term memory, and a long term memory based on probability combined with web search - are where most consumer-facing AI systems are today. Whether it’s ChatGPT, Copilot, Gemini, Claude, etc., the basic features are all the same, which brings us to the Five Levels.

Part 2: A Very Fast Recap of the Five Levels

If you skipped the previous issue of the newsletter that talked about the five levels in depth, here is the TLDR version, based on an old product-market fit framework:

Level 1: Done by you. You’re doing virtually all the work.

Level 2: Done with you. You’re doing about half the work.