Translating between 16 languages means supporting 256 possible source-to-target directions (16 times 16), such as Korean to English, French to Thai, and Portuguese to Japanese. One solution is to build a separate model for each direction. Roblox decided to build just one.

Roblox is a global platform where more than 70 million people play, create, and socialize every day across more than 15 million active experiences. Users span 180 countries and communicate constantly through in-experience text chat. A single unified model now handles real-time chat translation for all of those users, at roughly 100 milliseconds per translation and over 5,000 chats per second.

However, Roblox’s real engineering challenge wasn’t building a model that could translate. It was building a system that could translate at the speed of a conversation without breaking the user experience. In this article, we will look at what Roblox built and the trade-offs they made.

Disclaimer: This post is based on publicly shared details from the Roblox Engineering Team. Please comment if you notice any inaccuracies.

One Model Versus Many

Building a separate model for every language pair is the obvious starting point. One model for English to Korean, another for Korean to French, another for French to Thai, and so on.

With 16 languages, that’s 16 times 16, or 256 individual models. Each one needs its own training data, its own infrastructure, and its own maintenance. And when Roblox adds a 17th language, they don’t need one new model. They need 33 more, because 17 times 17 is 289. The approach grows quadratically, and it collapses under its own weight long before you reach production.
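The quadratic growth is easy to check with a few lines of Python. This is only an illustrative sketch: the function names are made up for this example, and the `include_same_language` flag is an assumption added to show both counting conventions (the 16 times 16 count includes same-language directions such as English to English).

```python
def per_direction_models(n_languages: int, include_same_language: bool = True) -> int:
    """Count the models needed if every source-to-target direction gets its own model."""
    if include_same_language:
        # Counts every (source, target) combination, e.g. 16 * 16 = 256.
        return n_languages * n_languages
    # Excludes directions where source and target are the same language.
    return n_languages * (n_languages - 1)


def added_by_next_language(n_languages: int, include_same_language: bool = True) -> int:
    """How many extra models one additional language would require."""
    before = per_direction_models(n_languages, include_same_language)
    after = per_direction_models(n_languages + 1, include_same_language)
    return after - before


if __name__ == "__main__":
    for n in (16, 17, 32):
        print(n, "languages ->", per_direction_models(n), "models")
    print("extra models for a 17th language:", added_by_next_language(16))
```

Under the 16 times 16 convention, a 17th language adds 33 models. If same-language directions are excluded, it adds 32. Either way, each new language costs more than the one before it.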

Roblox went a different direction.

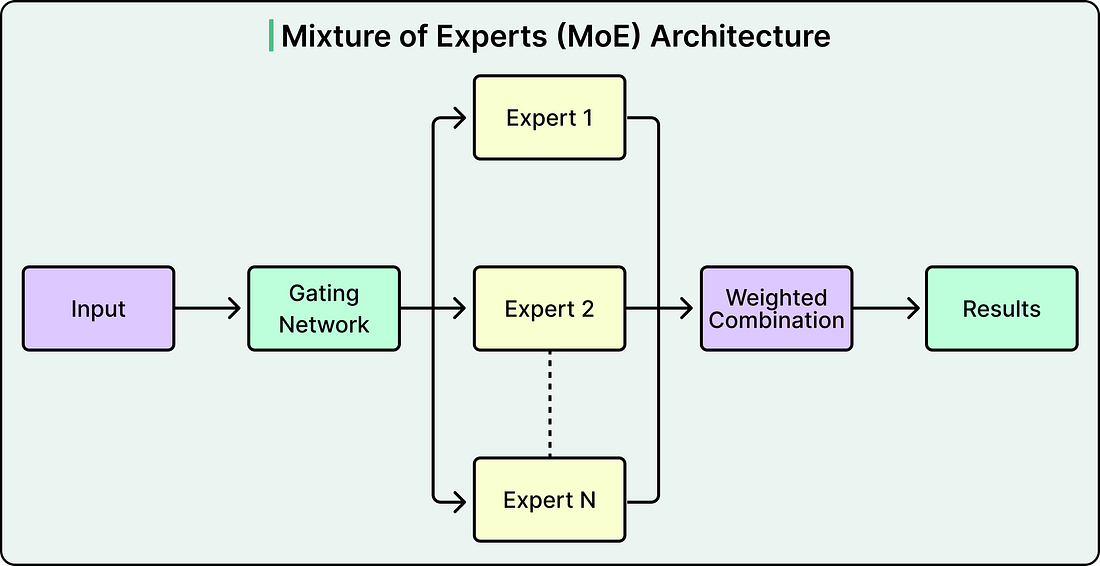

They built a single, unified transformer-based translation model that handles all 256 language directions. The key to making this work is an architecture called Mixture of Experts, or MoE. Instead of every translation request passing through every parameter in the model, a routing mechanism activates only a subset of specialized “expert” subnetworks depending on the input.

Different experts specialize in groups of similar languages. Given a source sentence and a target language, the system activates the relevant expert (or combination of experts) to generate the translation. Think of it as a team of specialist translators sitting behind a single reception desk. A request comes in, the routing layer sends it to the right specialist, and only that specialist does the work. The full team has broad expertise, but any single translation only activates a fraction of it.
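To make the reception-desk analogy concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a generic illustration of the Mixture of Experts idea, not Roblox's implementation: the source does not describe their gating function, the number of experts, or how many are active per request, so `top_k_route`, `moe_layer`, the gate weights, and the toy experts below are all hypothetical.

```python
import math


def softmax(scores):
    """Turn raw gate scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    probs = softmax(gate_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return [(i, probs[i] / norm) for i in chosen]


def moe_layer(x, gate_weights, experts, k=2):
    """Run only the selected experts on x and mix their outputs by gate weight."""
    # One gate score per expert: dot product of that expert's gate row with the input.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    routes = top_k_route(scores, k)
    output = [0.0] * len(x)
    for expert_index, weight in routes:
        expert_out = experts[expert_index](x)  # experts not selected are never called
        output = [o + weight * y for o, y in zip(output, expert_out)]
    return output, [expert_index for expert_index, _ in routes]


# Toy setup (assumed): four "experts" that just scale the input differently.
toy_experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
toy_gate = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

if __name__ == "__main__":
    mixed, active = moe_layer([2.0, 1.0], toy_gate, toy_experts, k=2)
    print("active experts:", active)
    print("mixed output:", mixed)
```

The point of the sketch is the cost profile: the layer holds four experts, but each call runs only two of them, so compute per request stays well below what the total parameter count would suggest.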

This unified approach has concrete benefits. When all languages are trained together, similar languages help each other. For example, Spanish and Portuguese share enough structure that training them in the same model improves translation quality for both. The model also learns enough about each language’s patterns that it can auto-detect the source language, even when the language setting is wrong or missing. It can even handle mixed-language input, where someone types in two languages within the same message, and still produce a reasonable translation into the target language.

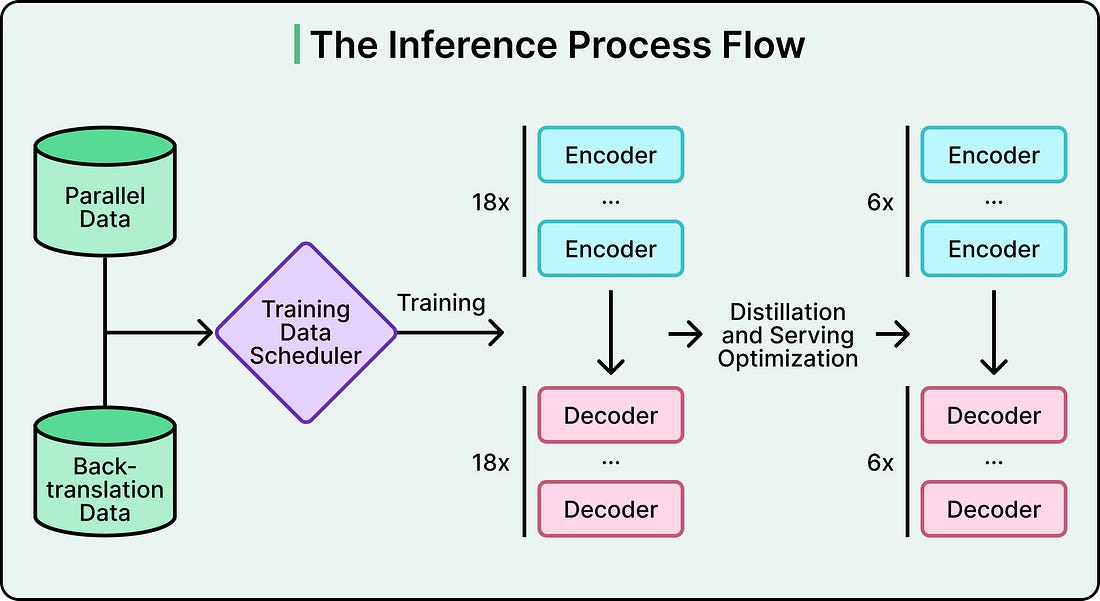

However, there’s a cost to consolidation. One model now carries the weight of all 256 directions. To handle that diversity with acceptable quality, Roblox’s model ended up with roughly 1 billion parameters. Running inference through a model that large is too slow and too expensive for real-time chat at scale. The architectural problem was solved, but the serving problem was just getting started.
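A rough back-of-envelope calculation hints at why a model of this size is costly to serve. The sketch below estimates the memory needed just to hold the weights. The numeric precisions are assumptions for illustration only: the source does not say which precision Roblox used, and the estimate ignores activations, caches, and runtime overhead.

```python
# Bytes per parameter for a few common numeric formats (illustrative assumptions).
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(n_params: int, dtype: str) -> float:
    """Memory needed to store the weights alone, in decimal gigabytes."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    n_params = 1_000_000_000  # "roughly 1 billion parameters"
    for dtype in BYTES_PER_PARAM:
        print(f"{dtype}: ~{weight_memory_gb(n_params, dtype):.1f} GB of weights")
```

Every serving replica has to hold those weights in memory, which is part of why serving cost rises with model size at thousands of requests per second.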