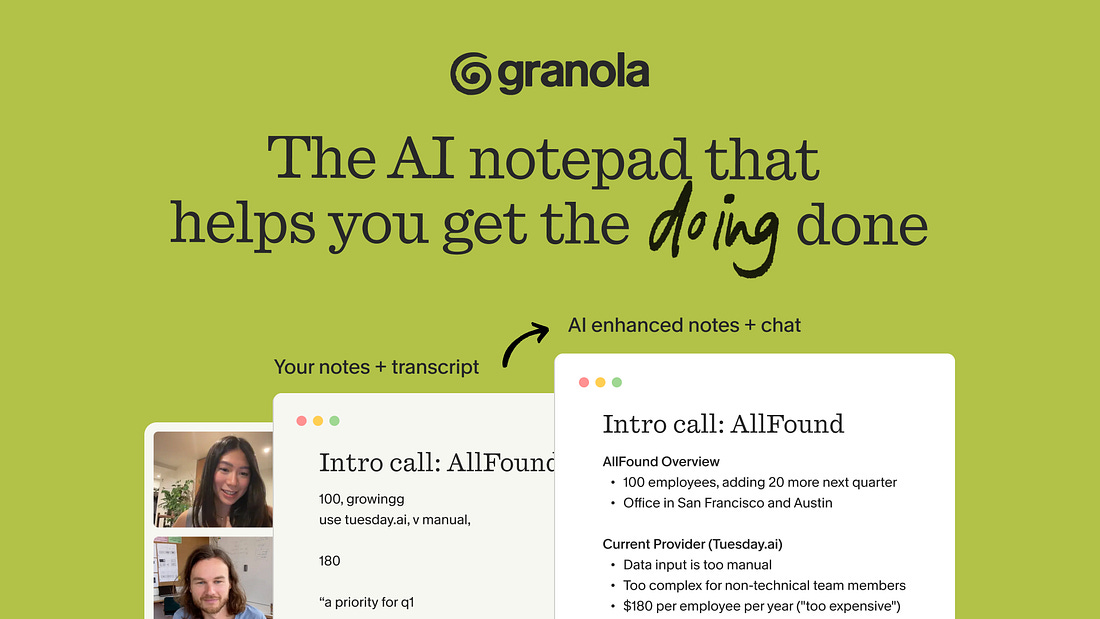

The AI notepad for people in back-to-back meetings

Most AI note-takers just transcribe what was said and send you a summary after the call.

Granola is an AI notepad. And that difference matters.

You start with a clean, simple notepad. You jot down what matters to you and, in the background, Granola transcribes the meeting.

When the meeting ends, Granola uses your notes to generate clearer summaries, action items, and next steps, all from your point of view.

Then comes the powerful part: you can chat with your notes. Use Recipes (pre-made prompts) to write follow-up emails, pull out decisions, prep for your next meeting, or turn conversations into real work in seconds.

Think of it as a super-smart notes app that actually understands your meetings.

Free 1 month with the code SCOOP

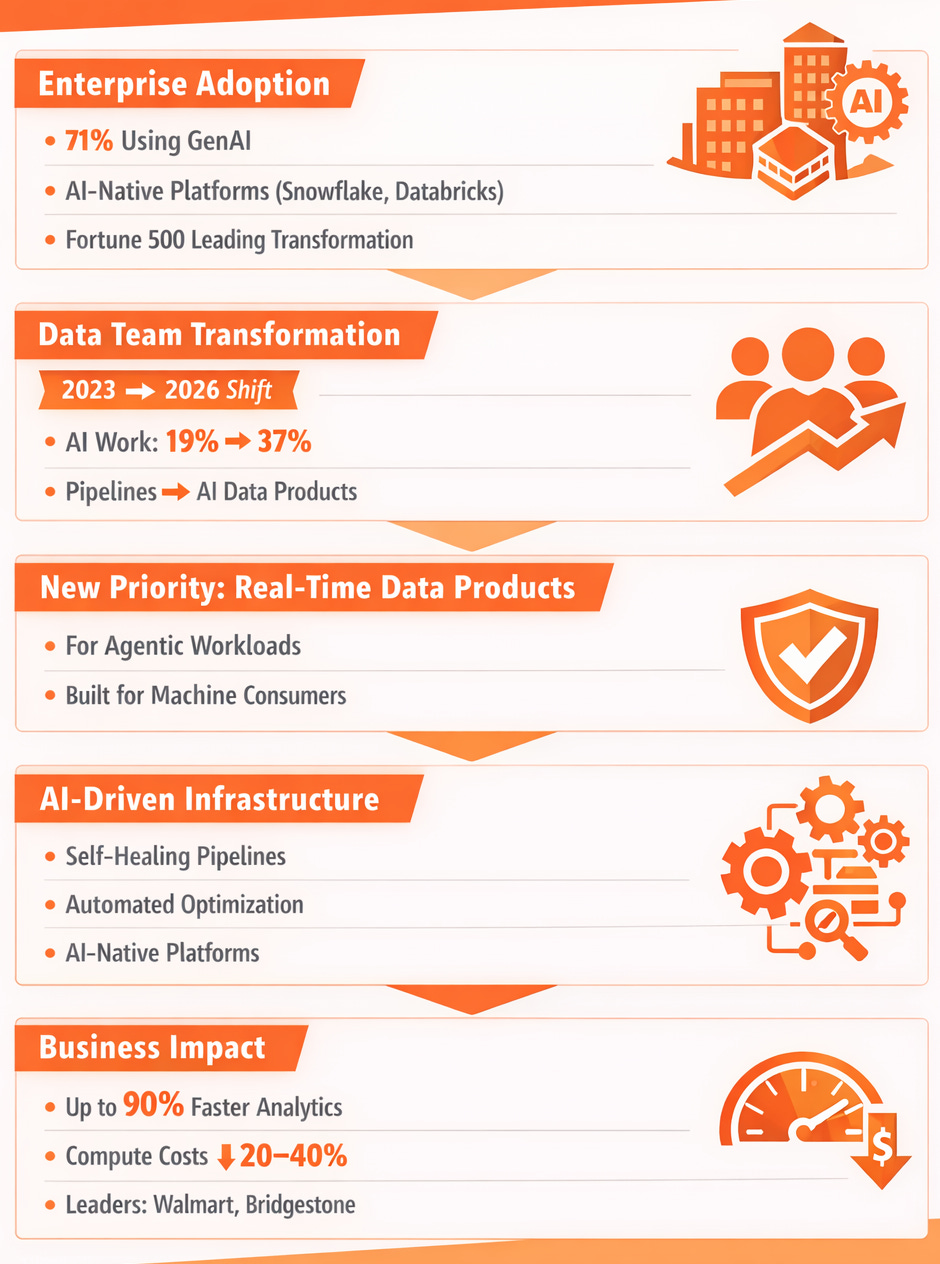

AI is no longer an augmentation layer in data engineering—it’s becoming the operating system. Fortune 500 companies like Walmart and Bridgestone are already seeing the upside: up to 90% faster analytics and 20–40% lower compute costs, driven by self-healing data pipelines and AI-native platforms like Snowflake and Databricks.

The shift is already visible in day-to-day operations. Data teams now spend 37% of their time on AI-related work, nearly double the 19% reported in 2023. The priority has clearly moved from building pipelines to delivering governed, real-time data products designed for agentic workloads, at a time when 71% of enterprises are actively deploying GenAI across their stack.

Still, the climb remains steep for smaller organizations. The tooling is maturing fast—but the organizational readiness gap is real.

From Pipelines to AI-Native Data Products

Data engineering has outgrown its roots in manual ETL scripting. Today, it’s about designing AI-ready data products—systems that don’t just move data, but understand, validate, and optimize it in real time.

AI already automates over half of repetitive engineering tasks, from schema inference to pipeline optimization. The gains are tangible. Walmart, for example, unlocked $5.6 million in annual savings through Databricks-powered self-service analytics.

But this evolution mirrors a familiar pattern from software engineering. As code generation became cheap, the bottleneck shifted to verification. The same is happening now: the challenge is no longer throughput—it’s trust. Ensuring data is fresh, accurate, and context-aware enough for autonomous agents is the new frontier.
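To make the "trust" problem concrete, here is a minimal sketch of a gate that a data product might run before an agent reads from it. Everything in it is an assumption for illustration: the `check_trust` function, the `TrustReport` type, the one-hour freshness window, and the 5% null-rate limit are hypothetical and not taken from any vendor's product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class TrustReport:
    fresh: bool        # was the data updated recently enough?
    null_rate: float   # share of required fields that are missing
    trusted: bool      # True only if both checks pass


def check_trust(rows, last_updated, now,
                max_age=timedelta(hours=1),
                required=("id", "amount"),
                max_null_rate=0.05):
    """Hypothetical freshness + completeness gate for a batch of dict rows."""
    fresh = (now - last_updated) <= max_age

    # Count missing required fields across all rows.
    total = len(rows) * len(required)
    missing = sum(1 for row in rows for field in required
                  if row.get(field) is None)
    # An empty batch is treated as fully missing, so it is never trusted.
    null_rate = missing / total if total else 1.0

    return TrustReport(fresh, null_rate, fresh and null_rate <= max_null_rate)


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
rows = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": None}]
report = check_trust(rows, last_updated=now - timedelta(minutes=30), now=now)
print(report)
```

In this example the batch is fresh but one of four required values is missing, so the gate refuses to mark it as trusted. A real system would likely add accuracy and context checks on top of these two.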

AI Across the Entire Data Lifecycle

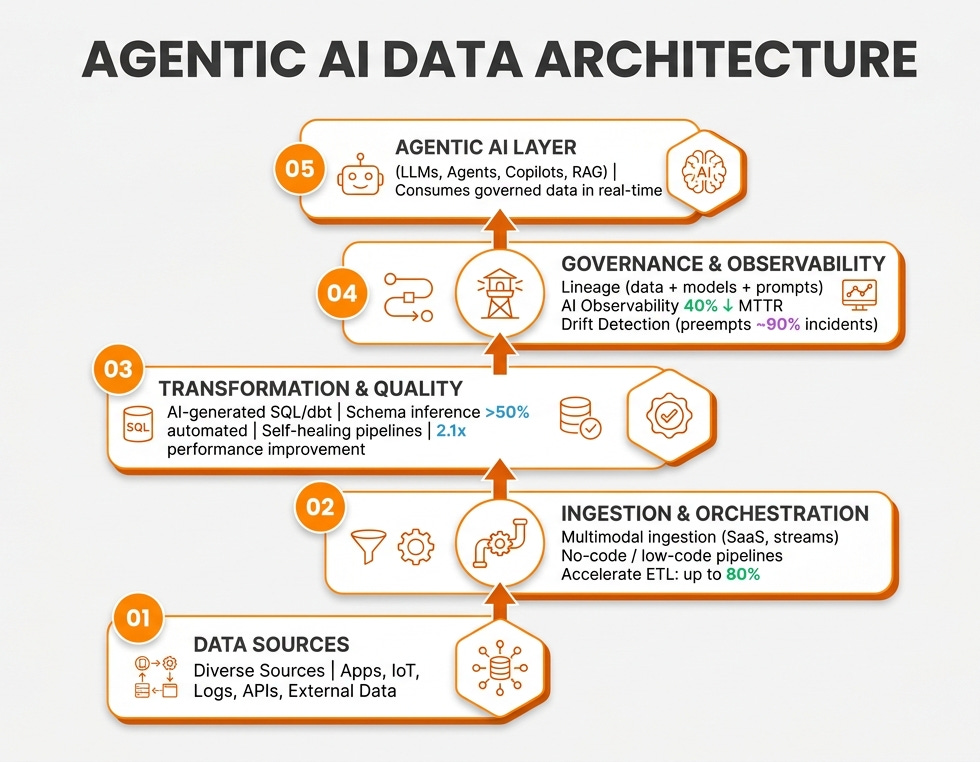

AI is embedding itself across every layer of the data stack, transforming brittle systems into adaptive, predictive platforms.

In ingestion and orchestration, agentic tools are reducing the need for custom pipelines. Snowflake’s Openflow enables multimodal data ingestion, from SaaS tools to real-time streams, without manual coding. Databricks’ Lakeflow Designer is accelerating ETL by up to 80% for companies like Trek Bikes.

In transformation and data quality, self-healing systems are becoming the default. AI can now preempt up to 90% of pipeline incidents. Snowflake’s Cortex Copilot generates SQL and dbt transformations, while Monte Carlo’s drift detection dramatically reduces mean time to resolution.
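As a rough illustration of what "self-healing" can mean in practice, the sketch below flags a batch whose mean has drifted from a baseline and quarantines it instead of loading it. This is a simple z-score check chosen for clarity, not the method used by Monte Carlo or any other vendor; the function names, the threshold of 3.0, and the sample values are all assumptions.

```python
from statistics import mean, stdev


def detect_drift(baseline, current, threshold=3.0):
    """Return True if the current batch mean is far from the baseline mean.

    Uses a z-score of the batch mean against the baseline's standard error.
    """
    base_mean = mean(baseline)
    base_std = stdev(baseline)
    if base_std == 0:
        # A constant baseline: any change in the mean counts as drift.
        return mean(current) != base_mean
    std_error = base_std / len(current) ** 0.5
    z = abs(mean(current) - base_mean) / std_error
    return z > threshold


def load_or_quarantine(baseline, batch):
    """Hypothetical pipeline step: hold back drifted batches for review."""
    return "quarantined" if detect_drift(baseline, batch) else "loaded"


baseline = [100, 102, 98, 101, 99]
print(load_or_quarantine(baseline, [100, 101, 99]))   # similar to baseline
print(load_or_quarantine(baseline, [120, 118, 122]))  # clearly shifted
```

The design choice here is to stop bad data at the boundary rather than repair it downstream, which is one common reading of incident prevention. Production tools typically track many more signals, such as volume, schema, and distribution shape.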

In serving and governance, the stack is consolidating. Platforms like Databricks’ Unity Catalog and Microsoft Fabric unify lineage across data, models, and even prompts—an essential requirement in a world where agents, not humans, are the primary consumers of data.
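One way to picture unified lineage is as a single graph whose nodes can be tables, models, or prompts. The sketch below is a toy version under that assumption; the asset names and the `upstream` helper are hypothetical and do not reflect the Unity Catalog or Microsoft Fabric APIs.

```python
from collections import deque

# Hypothetical lineage edges: each asset maps to the assets it was derived from.
LINEAGE = {
    "prompt:support_summary_v2": ["model:summarizer"],
    "model:summarizer": ["table:tickets_clean"],
    "table:tickets_clean": ["table:tickets_raw"],
    "table:tickets_raw": [],
}


def upstream(asset, lineage):
    """Return every asset that `asset` depends on, nearest first (breadth-first)."""
    seen = []
    queue = deque(lineage.get(asset, []))
    while queue:
        node = queue.popleft()
        if node not in seen:
            seen.append(node)
            queue.extend(lineage.get(node, []))
    return seen


deps = upstream("prompt:support_summary_v2", LINEAGE)
print(deps)
```

With one graph covering all three asset types, a question like "which raw tables feed this prompt?" becomes a single traversal rather than a lookup across separate catalogs.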

Vendor Momentum and Real ROI

The competitive landscape is increasingly defined by AI depth—not just data capabilities.

Snowflake is pushing Cortex Copilot and Openflow to accelerate development cycles, helping enterprises like Nissan cut project timelines in half.

Databricks continues to lead with Lakeflow and Unity Catalog, powering Walmart’s 90% faster analytics and multimillion-dollar savings.

Google Cloud is reducing developer friction with Data Science Agents that cut context-switching in BigQuery workflows by over 50%.

AWS and Microsoft are competing on efficiency—delivering 30–50% inference cost reductions and up to 40% compute savings through automated optimization and drift correction.

Interestingly, more than half of Snowflake customers also use Databricks, signaling that hybrid architectures—not winner-take-all platforms—are becoming the norm.